Executive Summary

Kubernetes gave teams a powerful model for building and scaling distributed applications. It also made testing those applications significantly harder.

When your system is a collection of loosely coupled services running across pods, namespaces, and clusters, the question isn't just "does this code work?" It's "does this code work when it's deployed alongside 40 other services, behind a service mesh, with network policies that didn't exist yesterday?"

This guide walks through the types of tests your Kubernetes microservices actually need, the operational challenges that trip teams up, and the tools worth evaluating in 2025.

What Tests Do Kubernetes Microservices Need?

You're not starting from scratch here. The standard testing categories still apply, but Kubernetes environments add layers that change how (and where) each test type runs.

Unit Tests

Unit tests cover individual functions and components within a single microservice. They run fast, they don't need a cluster, and they belong in your local development loop. Nothing Kubernetes-specific here, but they're the foundation everything else depends on.

Integration Tests

Integration tests verify that your microservice communicates correctly with its dependencies: other services, databases, message queues, external APIs. In Kubernetes, this means testing service discovery, DNS resolution, and cross-namespace communication patterns that don't exist outside the cluster.
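As a rough illustration of the DNS piece, here is a minimal Python sketch of the name an in-cluster integration test would typically resolve. The service and namespace names are hypothetical, and `cluster.local` is only the common default cluster domain; your cluster may use a different one.

```python
import socket


def service_fqdn(service: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """Build the DNS name Kubernetes assigns to a Service.

    Assumption: the cluster uses the conventional <service>.<namespace>.svc.<domain> form.
    """
    return f"{service}.{namespace}.svc.{cluster_domain}"


def resolves(hostname: str) -> bool:
    """Return True if the name resolves from wherever this code is running."""
    try:
        socket.getaddrinfo(hostname, None)
        return True
    except socket.gaierror:
        return False
```

Calling `resolves(service_fqdn("orders", "payments"))` from a pod in the cluster exercises cluster DNS. The same call from a laptop will normally fail, which is exactly why this class of test has to run inside the cluster.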

End-to-End (E2E) Tests

E2E tests simulate real user workflows across the full application stack. These require a running Kubernetes environment because you're validating the entire system, including ingress routing, ConfigMaps, Secret injection, and the interactions between services that only surface when everything is running together.

Performance and Load Tests

Performance tests measure how your application handles traffic under realistic and extreme conditions. In Kubernetes, this includes autoscaler behavior, pod scheduling latency, resource limit boundaries, and cross-node network performance. Running these outside the cluster produces misleading results because the infrastructure characteristics are fundamentally different.
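Whatever tool generates the load, the result usually boils down to a pass/fail check against a latency budget. The sketch below shows one way to express that in Python using a nearest-rank percentile. The function names and the budget values are hypothetical, not taken from any particular tool.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers (pct is 1-100)."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    # Multiply before dividing so whole-number ranks stay exact.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_budget(samples_ms, p95_budget_ms):
    """True if the 95th-percentile latency is within the budget."""
    return percentile(samples_ms, 95) <= p95_budget_ms
```

A percentile gate is usually more informative than an average here, because a handful of slow requests during a scale-up event can hide behind a healthy-looking mean.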

Chaos Engineering

Chaos tests evaluate your application's resilience when things go wrong: pod evictions, node failures, network partitions, DNS outages. Kubernetes clusters experience all of these in production, so testing recovery behavior before your users discover it is the entire point.
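The number a chaos experiment usually cares about is time to recovery. Here is a minimal Python sketch that polls a health probe after a fault has been injected. The probe is passed in as a function, so how you inject the fault and what "healthy" means are left as assumptions for your own setup.

```python
import time
from typing import Callable, Optional


def time_to_recover(
    is_healthy: Callable[[], bool],
    timeout_s: float,
    interval_s: float = 1.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[float]:
    """Poll is_healthy() until it returns True.

    Returns the elapsed seconds, or None if the timeout is reached first.
    """
    start = clock()
    while True:
        if is_healthy():
            return clock() - start
        if clock() - start >= timeout_s:
            return None
        sleep(interval_s)
```

A result of `None` means the service did not recover within the window, which is the finding you want to surface before production does.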

Common Challenges of Running Tests in Kubernetes

Most teams discover these problems after they've already committed to a testing approach. The tools themselves are capable. The operational overhead is what breaks you.

Monolithic CI/CD Pipelines Become Bottlenecks

When every test type lives in a single CI/CD pipeline, your integration and deployment speed drops to the pace of your slowest test suite. A 45-minute load test that holds up a change whose unit tests passed in 3 minutes is a problem that gets worse as your service count grows.
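The arithmetic is simple but worth spelling out. This small Python sketch uses the two durations from the example above and compares a pipeline where every suite blocks deployment with one where only the unit tests do. The function name is made up for illustration.

```python
def minutes_until_deployable(durations, blocking):
    """Sum the durations of the suites a change has to wait for."""
    return sum(durations[name] for name in blocking)


durations = {"unit": 3, "load": 45}

monolithic = minutes_until_deployable(durations, ["unit", "load"])
decoupled = minutes_until_deployable(durations, ["unit"])

print(f"monolithic: {monolithic} min, decoupled: {decoupled} min")
# prints "monolithic: 48 min, decoupled: 3 min"
```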

Test Artifact Storage Gets Messy Fast

Every tool generates its own output format: JUnit XML, screenshots, video recordings, performance traces, log files. Without a centralized approach to storing and retrieving these artifacts, debugging a failed test three days later turns into an archaeology project across S3 buckets and ephemeral pod logs.
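One common way to tame this is to reduce each tool's output to a shared summary before you store it. As a sketch, the Python below condenses a JUnit XML report using only the standard library. It assumes the usual `testsuite`/`testcase` layout with `failure`, `error`, and `skipped` child elements, and the summary shape is an illustration rather than any standard.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text: str) -> dict:
    """Reduce a JUnit XML report to totals and the names of failed cases."""
    root = ET.fromstring(xml_text)
    # The root may be <testsuites> or a single <testsuite>; iter() covers both.
    cases = list(root.iter("testcase"))
    failed = [
        f'{case.get("classname", "")}.{case.get("name", "")}'
        for case in cases
        if case.find("failure") is not None or case.find("error") is not None
    ]
    skipped = sum(1 for case in cases if case.find("skipped") is not None)
    return {"total": len(cases), "failed": failed, "skipped": skipped}
```

Storing a summary like this next to the raw artifact means the question "what failed three days ago?" can be answered without opening every report.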

Knowing When (and Where) to Trigger Each Test

Not every test should run on every commit. Load tests on every pull request burn compute and slow feedback. But running them too rarely means performance regressions hide for weeks. The real challenge is building a trigger strategy that matches each test type to the right event, without making your pipeline configuration unmanageable.

Retriggering a Single Test Requires Rerunning Everything

If your tests live exclusively inside CI/CD jobs, retriggering a flaky E2E test means rerunning the entire pipeline. On a team with 50+ services, this cascading wait time adds up to hours of wasted developer time per week.
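The alternative is to treat each test as something you can rerun on its own. A minimal Python sketch of that selection logic follows. The status strings, the attempt counter, and the cap of three attempts are all assumptions for illustration.

```python
def rerun_plan(results: dict, attempts: dict, max_attempts: int = 3) -> list:
    """Pick only the failed tests that still have retry attempts left.

    results maps test name -> "passed" or "failed";
    attempts maps test name -> how many times it has already run.
    """
    return sorted(
        name
        for name, status in results.items()
        if status == "failed" and attempts.get(name, 1) < max_attempts
    )
```

The cap matters: a test that fails on every attempt is probably not flaky, and retrying it forever only hides a real regression.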

Related reading: Orchestrating tests independently from your CI/CD pipeline can dramatically reduce these bottlenecks.

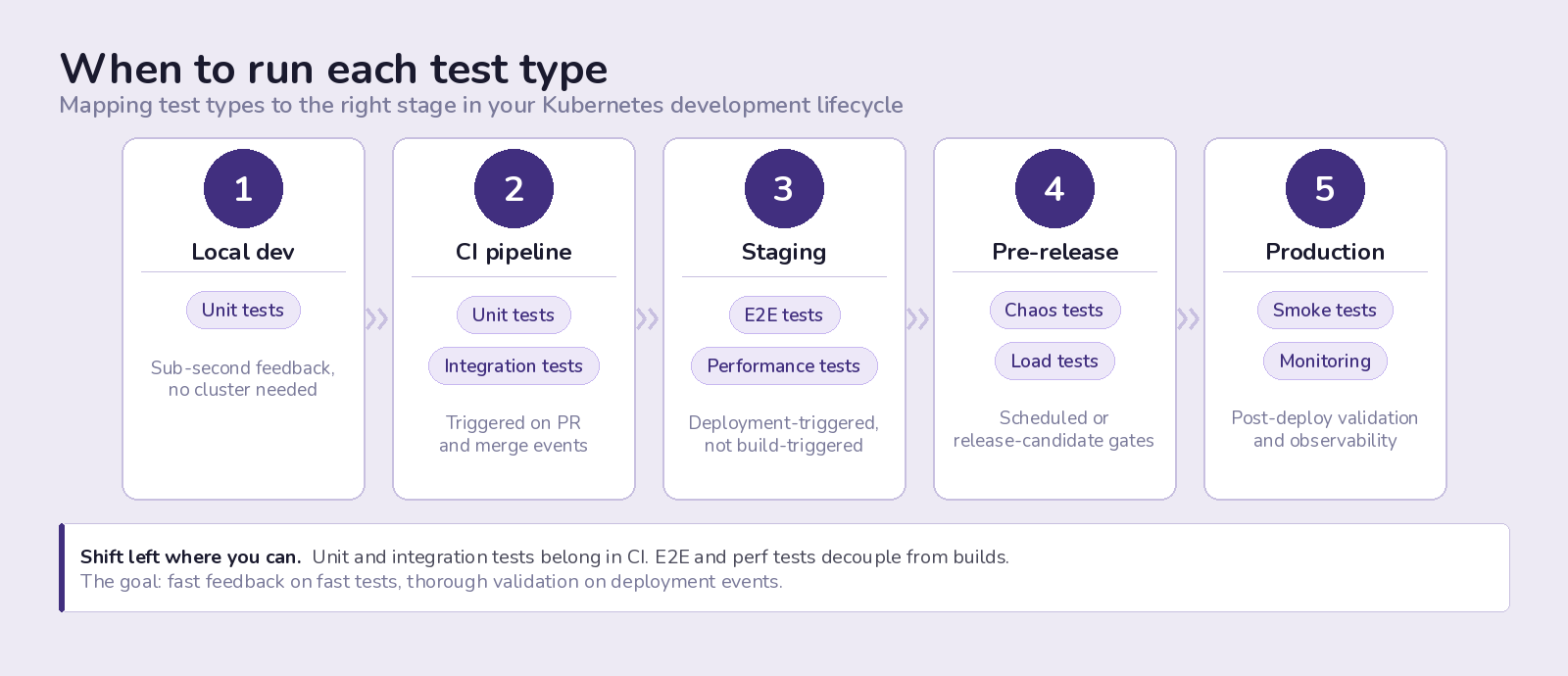

Testing Strategy: Where and When to Run Each Test Type

The right test at the wrong time is almost as bad as no test at all. Here's where each type fits in your development lifecycle.

Unit tests run locally during development and again in your CI pipeline. They provide sub-second feedback and should never block on infrastructure. If your unit tests need a cluster to run, they're not unit tests.

Integration tests belong in the CI pipeline, triggered on pull requests and merges. They should be fast enough (under 5 minutes) that developers don't context-switch while waiting. Design them to use ephemeral test dependencies rather than shared staging databases.

End-to-end tests validate complete user journeys and need a representative environment. Running them in CI is possible but tends to overcomplicate pipeline orchestration. A better pattern is to trigger them on deployment events to staging or pre-production environments, decoupled from the build pipeline itself.

Performance and load tests should run against environments that closely match production infrastructure. Schedule them on release candidates, after staging deployments, or on a regular cadence (nightly or weekly). Running them in CI for every commit is overkill and expensive.

Chaos tests work best as scheduled exercises or as part of pre-release validation. They require a stable baseline to measure against, so running them against a constantly changing development environment produces noise instead of signal.
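The placement described above can be written down as a small lookup table. Here is a Python sketch; the event names are hypothetical, and the mapping is one reading of the guidance in this section rather than a prescribed configuration.

```python
# Which suites run on which event. Event names are illustrative assumptions.
TRIGGERS = {
    "local_change": ["unit"],
    "pull_request": ["unit", "integration"],
    "merge": ["unit", "integration"],
    "staging_deploy": ["e2e", "load"],
    "release_candidate": ["load", "chaos"],
    "nightly": ["load", "chaos"],
}


def suites_for(event: str) -> list:
    """Return the suites to run for an event; unknown events run nothing."""
    return TRIGGERS.get(event, [])
```

Keeping the mapping in one place, rather than scattered across pipeline files, makes it easier to notice when a slow suite has crept onto a fast path.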

Best Tools for Testing Microservices in Kubernetes

No single tool covers every testing need. Here's what's worth evaluating across each category, based on Kubernetes-native compatibility and active community support.

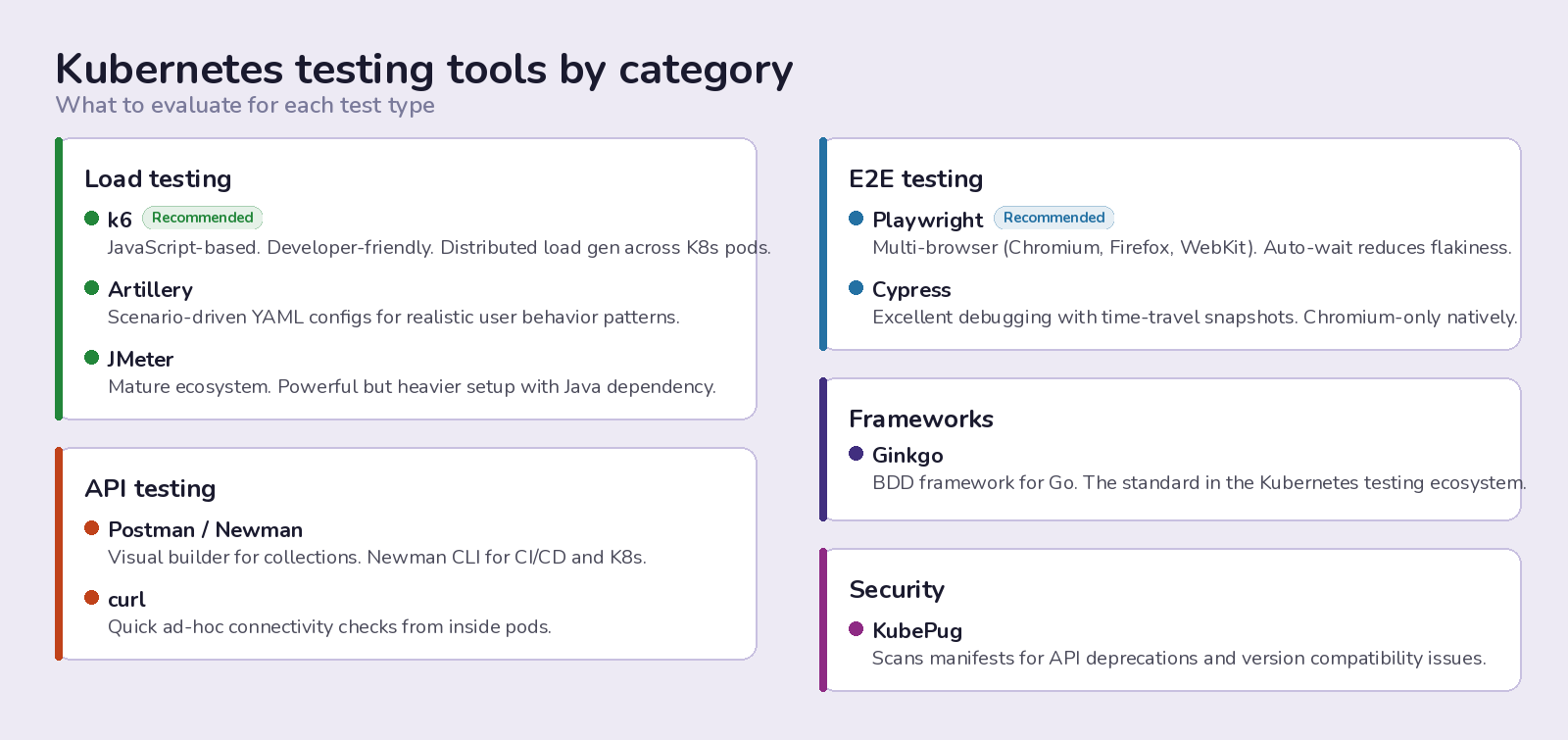

Load Testing

k6 is the most developer-friendly option in this category. Tests are written in JavaScript, it integrates well with CI/CD, and its output is clean enough to act on without a separate analytics layer. When you need to scale beyond a single instance, the k6 Operator can distribute load generation across Kubernetes pods.

Artillery works well for scenario-based load testing where you need to simulate realistic user behavior patterns rather than raw throughput. Its YAML-based test definitions are approachable for teams that don't want to write test code.

JMeter remains a solid choice for teams already invested in its ecosystem, though the XML-based test plans and Java dependency add overhead compared to newer alternatives. It's powerful but not lightweight.

End-to-End Testing

Playwright has become the default recommendation for most teams in 2025. It supports Chromium, Firefox, and WebKit, handles modern web application patterns well, and its auto-waiting logic reduces flakiness compared to older tools. Running Playwright tests in Kubernetes pods requires some container configuration but is well-documented.

Cypress is still widely used and has a strong plugin ecosystem. Its test runner provides excellent debugging capabilities with time-travel snapshots. The trade-off is narrower browser coverage: it runs Chromium-family browsers and Firefox, but WebKit support is still experimental.

API Testing

Postman (and its CLI counterpart Newman) works for teams that want a visual interface for building and managing API test collections. Newman runs those collections in CI/CD and Kubernetes environments.

curl is always there when you need quick connectivity checks and ad-hoc API testing from within pods. It's not a testing framework, but it's the fastest path to "is this service reachable?"
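If you want the same check inside a test suite rather than a shell, it is only a few lines of code. A minimal Python sketch follows; it tests only that a TCP connection can be opened, not that the service behind it is answering correctly.

```python
import socket


def is_reachable(host: str, port: int, timeout_s: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened in time."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False
```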

Testing Frameworks

Ginkgo (Go) is the standard BDD testing framework in the Kubernetes ecosystem. If your services are written in Go, Ginkgo with Gomega matchers gives you expressive, readable test suites that integrate well with Kubernetes testing patterns.

Security Testing

KubePug checks your Kubernetes manifests against deprecation and removal data for the Kubernetes API versions you're targeting. It is an upgrade-safety check rather than a vulnerability scanner, but it catches upgrade-related breaking changes before they hit your cluster.
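To make the idea concrete, here is a Python sketch of this kind of check. It is not how KubePug is implemented: the table is a tiny hand-written subset of well-known API removals, and the function name and output format are made up for illustration.

```python
# (apiVersion, kind) -> Kubernetes version in which that API was removed.
# A small illustrative subset, not a complete list.
REMOVED_IN = {
    ("extensions/v1beta1", "Ingress"): (1, 22),
    ("networking.k8s.io/v1beta1", "Ingress"): (1, 22),
    ("batch/v1beta1", "CronJob"): (1, 25),
    ("policy/v1beta1", "PodSecurityPolicy"): (1, 25),
}


def removed_apis(manifests, target_version):
    """List manifests whose API no longer exists in target_version.

    manifests is a list of already-parsed manifest dicts;
    target_version is a (major, minor) tuple such as (1, 25).
    """
    findings = []
    for manifest in manifests:
        api_version = manifest.get("apiVersion")
        kind = manifest.get("kind")
        removed = REMOVED_IN.get((api_version, kind))
        if removed is not None and target_version >= removed:
            name = manifest.get("metadata", {}).get("name", "<unnamed>")
            findings.append(
                f"{kind}/{name}: {api_version} removed in {removed[0]}.{removed[1]}"
            )
    return findings
```

For real upgrades, rely on a maintained tool and its data rather than a hand-rolled table like this one. The value of the sketch is in showing how cheap the check is to run on every change.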

Related reading: How to run K6 load tests in Kubernetes | Running Playwright tests at scale

The Operational Gap: Why Tools Alone Aren't Enough

Having the right testing tools is one piece of the puzzle. The harder problem is orchestrating them: triggering tests at the right time, routing results to the right people, storing artifacts where they're accessible, and doing all of this without turning your CI/CD pipeline into an unmaintainable monolith.

This is the gap that test orchestration platforms fill. Instead of wiring each tool into your pipeline individually and managing execution, artifact storage, and result aggregation yourself, an orchestration layer handles that infrastructure so your team focuses on writing tests, not managing test infrastructure.

Testkube was built specifically for this problem. It runs tests natively in your Kubernetes clusters, supports any testing framework (k6, Playwright, Cypress, Postman, JMeter, Ginkgo, and more), and gives you a single control plane for execution, scheduling, artifact storage, and results. Tests run where your code runs, with no external SaaS dependencies or networking tunnels to configure.

Key Takeaways

Testing microservices in Kubernetes requires a deliberate strategy that matches each test type to the right stage of your development lifecycle. Unit and integration tests belong in your CI pipeline for fast feedback. E2E tests should be decoupled from builds and triggered on deployment events. Performance and chaos tests need production-like environments and thoughtful scheduling.

The tooling is mature. The operational challenge is orchestrating all of it without creating a pipeline that's more complex than the application it's testing. Teams that solve the orchestration problem, whether through a dedicated platform or disciplined automation, ship faster and catch more bugs before production.

About Testkube

Testkube is the open testing platform for AI-driven engineering teams. It runs tests directly in your Kubernetes clusters, works with any CI/CD system, and supports every testing tool your team uses. By removing CI/CD bottlenecks, Testkube helps teams ship faster with confidence.

Get Started with a trial to see Testkube in action.
