Executive Summary

Two patterns. One clear lesson about what gets left behind when AI enters your development pipeline.

If you are leading an engineering team right now, you are feeling the pull. AI-assisted development promises a step change in velocity. More code, faster, with fewer people blocking the pipeline. Most teams are saying yes.

And the velocity gains are real. I see them constantly. Pull requests are up. Lines of code per PR are up. The output is genuinely impressive.

But something else is quietly going up too: the pressure on your testing infrastructure. And for a lot of teams, testing has not kept pace. What I want to share with you is the pattern I keep seeing, what it costs, and what the teams getting ahead of it are doing differently.

The gap that AI is opening up

When teams start using AI to generate code, they often do not change how they test it. That is the first mistake. And it shows up in ways that are subtle at first.

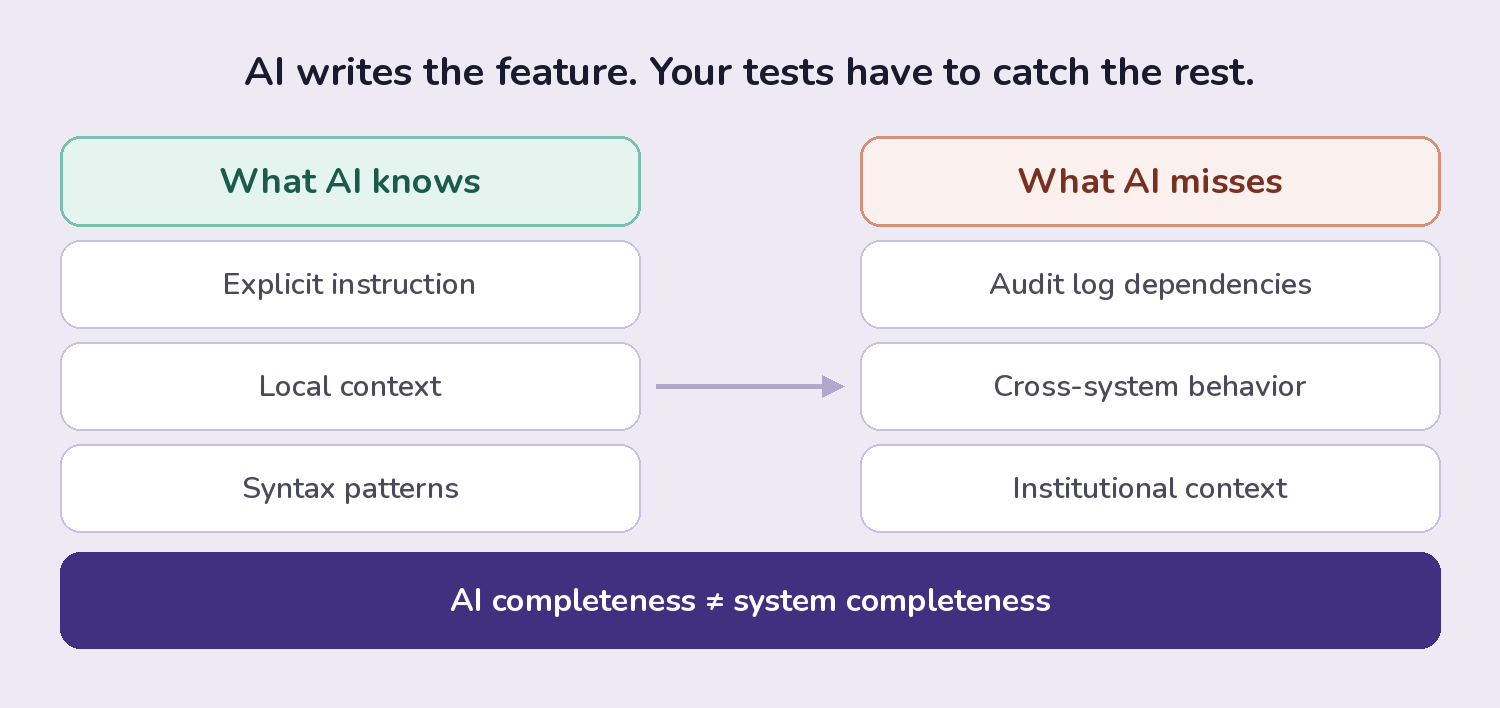

I call it the ripple effect. You ask AI to add a feature, and it does. It wires up the logic, updates the form, and makes it work exactly where you pointed it. What it does not know about is the audit log that should capture that action, the API endpoint that needs to expose the new field, or the search filter your customers expect it to appear in. AI only knows what you tell it. It does not carry the institutional knowledge of your most experienced engineer.

Without test coverage that validates behavior across the full system, those gaps slip through. Not as obvious crashes. As quiet inconsistencies that surface in production, usually right after a big push.
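To make "validates behavior across the full system" concrete, here is a minimal Python sketch. Everything in it is hypothetical: `InMemoryApp` stands in for a real application, and `update_record`, `api_get`, `search`, and `ripple_gaps` are illustrative names, not any framework's API. The simulated change writes the new field and the audit entry but never updates the search index, which is exactly the kind of gap described above.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryApp:
    """Hypothetical stand-in for a real system: one write path, several read surfaces."""
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)
    search_index: dict = field(default_factory=dict)  # (field, value) -> set of record ids

    def update_record(self, record_id: str, name: str, value: str) -> None:
        # The "AI-generated" change: the write path stores the new field...
        self.records.setdefault(record_id, {})[name] = value
        # ...and writes the audit entry, but never touches the search index.
        self.audit_log.append((record_id, name, value))

    def api_get(self, record_id: str) -> dict:
        # The API exposes whatever is stored on the record.
        return dict(self.records.get(record_id, {}))

    def search(self, name: str, value: str) -> list:
        return sorted(self.search_index.get((name, value), set()))


def ripple_gaps(app: InMemoryApp, record_id: str, name: str, value: str) -> list:
    """Write through the public path, then list every surface that does not reflect it."""
    app.update_record(record_id, name, value)
    gaps = []
    if app.api_get(record_id).get(name) != value:
        gaps.append("api")
    if (record_id, name, value) not in app.audit_log:
        gaps.append("audit_log")
    if record_id not in app.search(name, value):
        gaps.append("search")
    return gaps


if __name__ == "__main__":
    app = InMemoryApp()
    print(ripple_gaps(app, "order-42", "priority", "high"))  # ['search']
```

A test aimed only at the write path would pass here. The cross-surface check is what catches the missing search update before a customer does.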

There is a second, more operational problem. As AI increases your code output, your test suites face more demand. If you are running more pull requests through infrastructure built for yesterday's volume, build times go from five minutes to twenty. The velocity gains from AI stop reaching your end users because the pipeline cannot keep up.
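Why do build times stretch instead of simply staying flat? A toy queueing model helps. The sketch below assumes a fixed pool of CI runners, one pull request arriving every `interval_min` minutes, and every test run taking exactly `test_min` minutes. Real pipelines are noisier, and the function name and numbers are illustrative only, not measurements.

```python
import heapq


def avg_time_to_feedback(prs: int, interval_min: float, test_min: float, runners: int) -> float:
    """Average minutes from PR opened to test result, with a fixed runner pool.

    Deterministic model (an illustrative assumption): one PR arrives every
    `interval_min` minutes and each test run takes exactly `test_min` minutes.
    """
    free_at = [0.0] * runners  # min-heap of times at which each runner becomes free
    heapq.heapify(free_at)
    total = 0.0
    for i in range(prs):
        arrival = i * interval_min
        start = max(arrival, heapq.heappop(free_at))  # wait for the earliest free runner
        finish = start + test_min
        heapq.heappush(free_at, finish)
        total += finish - arrival
    return total / prs


if __name__ == "__main__":
    print(avg_time_to_feedback(20, interval_min=3, test_min=5, runners=2))   # 5.0
    print(avg_time_to_feedback(20, interval_min=2, test_min=5, runners=2))   # 9.5
    print(avg_time_to_feedback(100, interval_min=2, test_min=5, runners=2))  # 29.5
```

With two runners and a five-minute suite, capacity is 0.4 pull requests per minute. At one PR every three minutes the pool keeps up and feedback stays at five minutes. At one every two minutes (50% more volume), demand exceeds capacity, and time to feedback keeps climbing the longer the burst lasts.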

The first sign is usually bugs in production. Customers complain. Things are not working. And when you trace it back, it is not that AI wrote bad code. It is that AI did not know enough about your system to write complete code.

What teams are actually doing about it

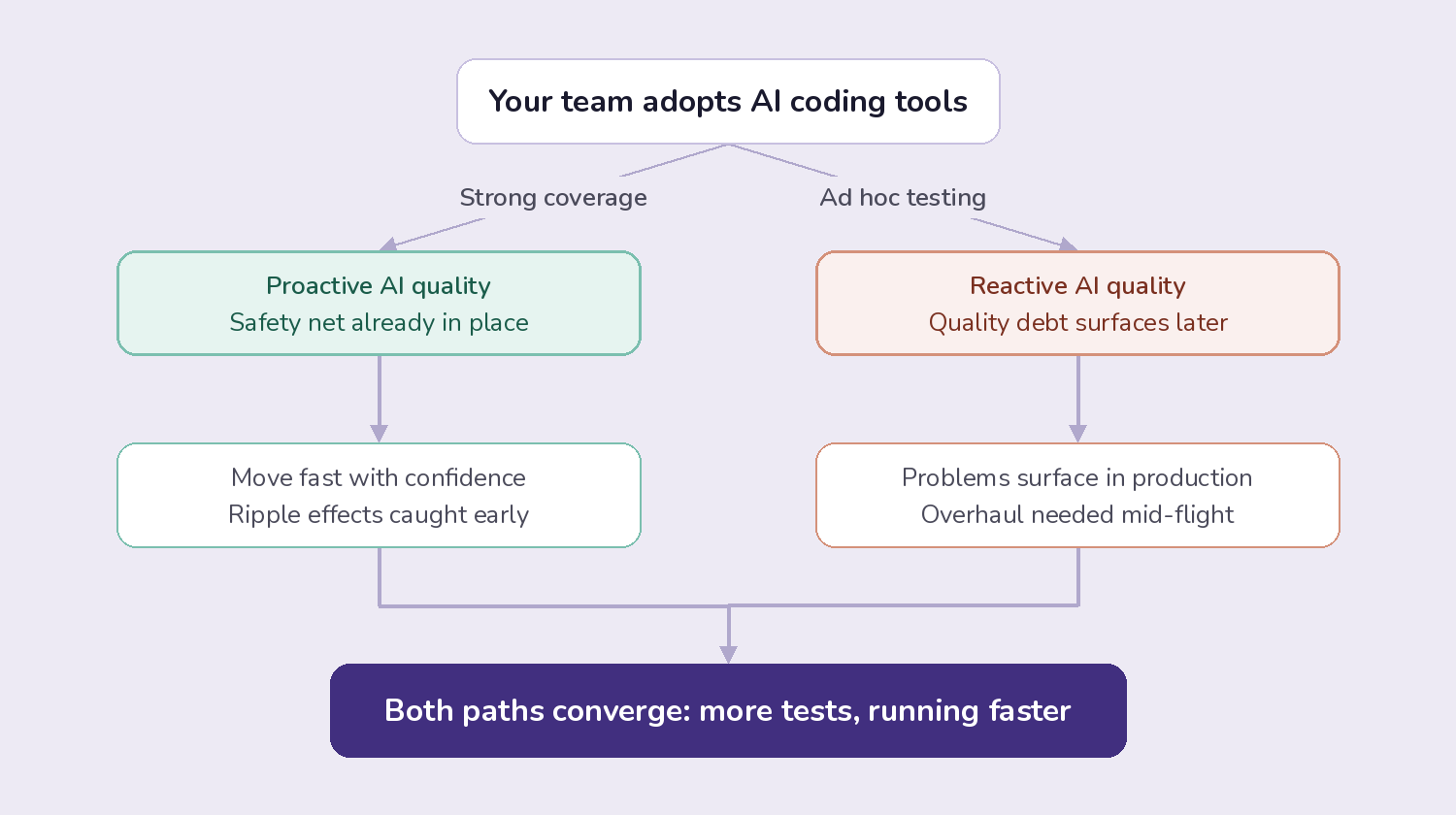

In talking with engineering teams across our customer base, two patterns have emerged. They represent very different bets on how to sequence AI adoption and quality.

Pattern one: AI first, quality catch-up

Some teams went all-in on AI coding tools without changing their testing approach. For a while, it felt like pure upside. Then the signals started appearing: a few more defects per sprint, a support queue that kept growing, pipeline times that stretched longer every week.

This is the slow-burn pattern. One or two extra bugs feel like noise. But when AI becomes the dominant way your team ships and the support queue keeps climbing, you reach an inflection point: the velocity gains are no longer worth the quality costs. At that point you are not just dealing with a quality problem. You are dealing with a testing infrastructure problem that has to be fixed urgently, mid-flight, while you are still shipping.

Pattern two: Quality foundation first

Other teams made a different call. They looked at the AI wave coming and decided they were not ready to let it in until their testing infrastructure could absorb what it would generate. They paused, built the foundation, and planned the rollout of coding agents for later in their roadmap.

The instinct behind this approach is sound, and teams who have already lived through the harder lesson of pattern one tend to describe it the same way.

These teams are not leaving velocity on the table. They are sequencing to capture it fully, and without the scramble.

It is not AI-first vs. quality-first

Here is where I want to push back on how this conversation usually goes. The framing of AI-first versus quality-first is too simple, and I think it sends people in the wrong direction.

AI-first can also mean quality-first. They are not opposites. The real distinction is whether your quality approach is proactive or reactive.

If your team already has strong end-to-end test coverage and mature testing infrastructure, you can move AI-first with confidence. The safety net is already there. You will catch the ripple effects. You will know when something breaks across the system.

If you do not have that foundation, then adopting AI coding tools is borrowing against quality debt you have not yet paid down. The problems will surface. It is a matter of when, not if.

There is also a trap worth naming directly. Some teams assume AI-generated code is cleaner and, therefore, needs less testing. It does not. AI produces syntactically correct code. It does not produce contextually complete code. Just because your coverage numbers look fine does not mean your application behaves the way your users expect. Your users do not care whether a human or an AI wrote the code that broke their workflow.

The teams that struggle are not always moving too fast. They are moving without first asking what faster code output actually means for their quality pipeline.

Both paths lead to the same place

Here is what proactive and reactive teams have in common: both paths lead to more tests. Whether you are catching up after a quality crisis or building ahead of an AI rollout, the destination is the same. More tests, running more frequently, in a pipeline that has to stay fast even as the volume grows.

If you use AI to increase velocity, you may suddenly be opening 50% more pull requests per week, and each one requires test execution. That is where Testkube fits in.
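The capacity math behind a 50% jump in pull requests is linear, which is exactly what makes it easy to underestimate. Here is a back-of-the-envelope sketch with hypothetical inputs: 200 pull requests per week, three test runs per PR (each push re-runs the suite), 12 minutes per run, runners effectively available 40 hours a week, and a 75% utilization ceiling to leave headroom for bursts. `runners_needed` is an illustrative helper, not a Testkube feature.

```python
import math


def runners_needed(prs_per_week: int, runs_per_pr: int, minutes_per_run: float,
                   runner_hours_per_week: float, target_utilization: float) -> int:
    """Smallest runner count whose weekly capacity, capped at the target
    utilization, covers the weekly test-execution demand."""
    demand_min = prs_per_week * runs_per_pr * minutes_per_run
    usable_min_per_runner = runner_hours_per_week * 60 * target_utilization
    return math.ceil(demand_min / usable_min_per_runner)


if __name__ == "__main__":
    before = runners_needed(200, 3, 12, 40, 0.75)  # 7,200 runner-minutes of demand
    after = runners_needed(300, 3, 12, 40, 0.75)   # 50% more PRs: 10,800 runner-minutes
    print(before, after)  # 4 6
```

Two extra runners sounds small. If the pool stays at four, though, the extra demand does not disappear; it turns into queueing, and developers feel it as slower feedback on every pull request.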

Testkube is a test orchestration platform built for cloud-native teams. It is designed to run any test, at any scale, inside a containerized environment. That matters because the bottleneck is almost never whether your tests exist. It is whether you can execute them fast enough to keep pace with the code being generated.

For teams in reactive mode, Testkube starts showing value from the first execution: a single view across all your test activity, AI-powered failure analysis that shortens troubleshooting time, and parallelized execution that keeps pace with higher pull request volume.
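Parallelized execution is a general technique, and how much it helps depends on how evenly the work is split. Below is a minimal sketch of one common approach, greedy longest-processing-time sharding. It is not a description of how Testkube schedules tests, and the test names and durations are hypothetical.

```python
import heapq


def shard_tests(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Greedy longest-processing-time sharding: assign each test, longest first,
    to the worker with the least total assigned time so far."""
    heap = [(0.0, i) for i in range(workers)]  # (assigned seconds, worker index)
    shards: list[list[str]] = [[] for _ in range(workers)]
    for name, secs in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, i = heapq.heappop(heap)
        shards[i].append(name)
        heapq.heappush(heap, (load + secs, i))
    return shards


def wall_clock(durations: dict[str, float], shards: list[list[str]]) -> float:
    """Shards run in parallel, so suite wall-clock time is the slowest shard's total."""
    return max(sum(durations[t] for t in shard) for shard in shards)


if __name__ == "__main__":
    durations = {  # hypothetical per-test durations in seconds
        "checkout_e2e": 300, "search_e2e": 240, "api_contract": 180,
        "audit_log": 120, "login_e2e": 90, "profile": 60,
    }
    shards = shard_tests(durations, workers=3)
    print(shards)
    # [['checkout_e2e', 'profile'], ['search_e2e', 'login_e2e'], ['api_contract', 'audit_log']]
    print(sum(durations.values()), "->", wall_clock(durations, shards))  # 990 -> 360
```

Six tests that take 990 seconds serially finish in 360 seconds on three workers. Because the slowest shard sets the wall-clock time, the longest individual tests put a floor under how much parallelism alone can help.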

For teams building proactively, Testkube becomes the platform everything else grows on. When your coding agents come online and pull requests multiply, the execution layer is already in place and already battle-tested. You are not scrambling. You are ready.

Testkube also decouples testing from your CI/CD pipelines entirely. Test execution scales independently of your build infrastructure, which is exactly the capability you need when AI starts pushing code volume into territory your current setup was never built for.

Is your testing infrastructure ready for what is coming?

The question I would encourage you to sit with is not which approach is right. It is whether your current testing infrastructure is ready for the AI wave when it hits full force on your team.

Looking a couple of years ahead, I think we will see fleets of AI agents writing code, generating tests, running them, and troubleshooting failures. Quality engineers will not be writing Selenium selectors. They will be one level up in the abstraction stack, managing agent context, setting requirements, and providing oversight. The same kind of shift we saw when developers moved from assembly to high-level languages.

That future is coming faster than most teams expect. The teams positioned to benefit from it are the ones building the orchestration layer now, not as a defensive move, but as the foundation for velocity that actually holds.

Do not underestimate the impact of testing when you start adopting AI. Think about how your testing pipelines need to adapt as you generate more code. Are they ready to run more tests? How are you hedging against ripple effects across your system?

The teams getting ahead of this are not moving slower. They are setting up so they can move faster, and trust what they ship.

About Testkube

Testkube is the open testing platform for AI-driven engineering teams. It runs tests directly in your Kubernetes clusters, works with any CI/CD system, and supports every testing tool your team uses. By removing CI/CD bottlenecks, Testkube helps teams ship faster with confidence.

Start a trial to see Testkube in action.