Executive Summary

Embrace Kubernetes to Improve Performance, Scalability, and Ease of Configuration for your Tests

Testing applications is becoming increasingly difficult as they grow in size and complexity. For someone responsible for testing an application of that scale, simply running tests within individual containers doesn’t help much. Hence, there is a need for a scalable and flexible approach that allows for more efficient and effective testing to catch those pesky bugs.

One technique that gained popularity for testing distributed applications was using Docker-in-Docker or DinD. The idea was to run a Docker daemon inside a Docker container itself and then use that inner Docker environment to orchestrate the full application stack on a single machine.

Initially, it provided a seemingly straightforward way to create realistic multi-container environments for integration testing. However, as applications grew, teams ran into its substantial drawbacks and limitations, from resource constraints and overhead to inconsistencies between test and production environments.

If you’re responsible for testing in your organization, this scenario probably sounds familiar. Testing with Docker-in-Docker comes with its own issues, and for large applications it can become a nightmare. In this blog post, we’ll look at the challenges of the Docker-in-Docker approach and why you should embrace Kubernetes for testing.

Docker in Docker 101 (DinD)

Before we delve further into this post, a short primer on Docker-in-Docker (DinD) is in order. As the name suggests, it means running a Docker daemon inside a Docker container, so that further containers can be started from within that container. The approach first surfaced in late 2013 and soon gained popularity.

One common use case of DinD is continuous integration (CI) pipelines. The CI system could start up a DinD container as part of the build process. This inner Docker is then used to pull application images, start their containers, and run tests within that isolated environment.
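As an illustration of this pattern, here is a sketch of a CI job that starts a DinD service and runs tests against it. It assumes GitLab CI with a runner that allows privileged containers (the text above does not name a CI system); the job name, the Docker version tag, and the image name `my-app-tests` are hypothetical.

```yaml
# .gitlab-ci.yml (sketch; assumes a GitLab runner that allows privileged containers)
integration-tests:
  image: docker:24.0.5            # Docker CLI used by the job script
  services:
    - docker:24.0.5-dind          # inner Docker daemon started alongside the job
  variables:
    DOCKER_TLS_CERTDIR: "/certs"  # lets the CLI and the dind daemon share TLS certificates
  script:
    - docker build -t my-app-tests .   # hypothetical image name
    - docker run --rm my-app-tests     # run the test suite on the inner Docker daemon
```

Everything the job builds and runs lives inside the inner daemon and disappears when the job ends, which is what makes the approach attractive for isolated test runs.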

While DinD can be convenient for certain workflows as mentioned above, it introduces a range of complexities and limitations. Let us discuss them in the following section.

Problems with Running Docker In Docker

Docker-in-Docker seemed like a convenient solution for testing distributed applications initially, but the reality is that this approach has significant drawbacks that become more evident as your applications grow in complexity. From a testing standpoint, DinD introduces several challenges that can undermine the very goals you're trying to achieve.

Inefficient Performance

One of the major drawbacks of the DinD approach is its inefficient performance, especially at scale. If not managed carefully, DinD hogs your resources: each Docker daemon consumes its own share of CPU, RAM, and network resources, and on a system with limited resources this overhead can quickly lead to performance bottlenecks and instability while testing.

Inability to Mirror Production Setup

Testing an application in a production-like environment is vital. However, a DinD environment often fails to accurately mimic your actual production setup. The nested container environment may behave differently because its storage, networking, and security configurations differ from those in production. For instance, your production containers may run on a different container runtime (containerd, CRI-O, etc.) than the one DinD provides by default.

Docker Security Concerns

When your applications deal with sensitive data, you need to be extremely cautious when testing with DinD, as it can open up new vulnerabilities and security risks if not configured properly. By default, the Docker daemon runs with root privileges; a misconfigured daemon could expose your application data or open security loopholes. Furthermore, in multi-tenant setups, isolation between the nested Docker instances is a concern, as weak isolation could allow cross-tenant data leaks.
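To make the privilege concern concrete, here is a minimal sketch of how a DinD container is typically started with Docker Compose. The service name is hypothetical; the key point is that the official `docker:dind` image needs privileged mode to run its inner daemon.

```yaml
# docker-compose.yml (sketch; service name is hypothetical)
services:
  dind:
    image: docker:dind
    privileged: true   # required by docker:dind; lifts most container isolation from the host
```

A privileged container has broad access to the host kernel and devices, so anything that compromises the inner daemon is effectively one step away from the host.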

Docker Scalability Concerns

With DinD, you are limited to the resources of a single host machine. Even if you run multiple DinD instances, using resources efficiently and automating scaling is difficult without an orchestrator. The lack of out-of-the-box scaling options severely limits the ability to conduct rigorous integration, load, and performance tests. Teams struggle to accurately model real-world scale, resiliency scenarios, and sophisticated deployment requirements.

From performance overheads to security and scaling concerns, the technical debt of using DinD stacks up rapidly, and continued usage of this approach may lead to maintenance overhead. However, the major concern is that DinD severely restricts your ability to conduct comprehensive integration, load, and other performance tests on a large scale.

Even the creator of DinD asks you to think twice before using DinD for your CI or testing environment.

Why Embrace Kubernetes For Testing?

As testing needs evolve, the Docker in Docker testing approach fails to provide the scalability, flexibility, and resilience that modern distributed systems demand. This realization led us to explore Kubernetes as a more robust solution for modeling and testing our cloud-native applications. Let us look at how embracing Kubernetes for Testing overcomes the issues with DinD.

Built-in Scalability

Kubernetes comes with built-in orchestration capabilities that enable on-demand scaling, something DinD does not offer out of the box. It provides mechanisms like horizontal pod autoscaling, cluster autoscaling, and the ability to automatically schedule containers across a pool of nodes. This allows you to model realistic load scenarios by scaling application components using simple commands or autoscaling rules based on CPU or memory consumption.
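As a sketch of such an autoscaling rule, the manifest below scales a Deployment between 2 and 10 replicas based on average CPU utilization. The names `checkout` and `checkout-hpa` and the numeric thresholds are hypothetical; the rule also assumes that a metrics source such as metrics-server is installed and that the pods declare CPU requests.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: checkout-hpa            # hypothetical name
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: checkout              # hypothetical Deployment under test
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # add replicas when average CPU exceeds 70% of requests
```

Applying the same rule in a test cluster lets a load test exercise the scaling behavior that production would see.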

Consistent Production-Mirrored Environments

A core goal of any testing process is to run tests in an environment that closely resembles production. Today, Kubernetes is often dubbed the OS of the cloud and has become a standard for deploying and managing cloud applications. If your production workloads already run on Kubernetes, your test clusters can use the same manifests, container runtime, and networking model, so they mirror production far more closely and make testing more effective.

Simplified and Efficient

Kubernetes is known to be a complex tool to master and involves a steep learning curve at the start. However, as you progress through the Kubernetes journey, things become simpler. The rise of cloud-native CI/CD solutions built specifically for Kubernetes has further contributed to standardizing testing workflows. Instead of building custom pipelines, teams can leverage pre-built integrations for spinning up test clusters, running test suites within live environments, collecting telemetry data, and more.

These are just a few of the reasons to embrace Kubernetes for testing. It brings many advantages that make your testing processes more efficient. However, one of the challenges of testing in Kubernetes is the lack of Kubernetes-native testing tools and frameworks, which limits your ability to take full advantage of Kubernetes. This is where Testkube comes in.

Run Your Tests in Kubernetes With Testkube

As the only Kubernetes-native test execution and orchestration framework, Testkube takes your testing efforts to the next level. It helps you build and optimize your test workflows for Kubernetes. It has a suite of advantages, some of which are listed below:

- You can plug any testing tool into Testkube, make it Kubernetes-native, and take full advantage of Kubernetes. Check the complete list of Testkube integrations.

- With Testkube you can easily create and manage complex test scenarios across multiple environments.

- Testkube integrates with a suite of CI/CD tools to enhance your DevOps pipelines by enabling complete end-to-end testing of your applications.

Moving your testing framework from DinD to Kubernetes might seem intimidating, but Testkube makes it much easier. As mentioned earlier, Testkube offers a suite of features, tools, and integrations that streamline test deployment, execution, and monitoring within Kubernetes, ensuring your tests run as close to the production environment as possible.

Using Testkube Test Workflows

Testkube allows you to create end-to-end test workflows using your favorite testing tool. It also streamlines your testing process by decoupling test execution from your CI pipeline. This democratizes the testing process, allowing teams to execute tests on-demand without disrupting the CI workflow. By separating test execution from the CI pipeline, Testkube offers greater flexibility and control over the testing process.

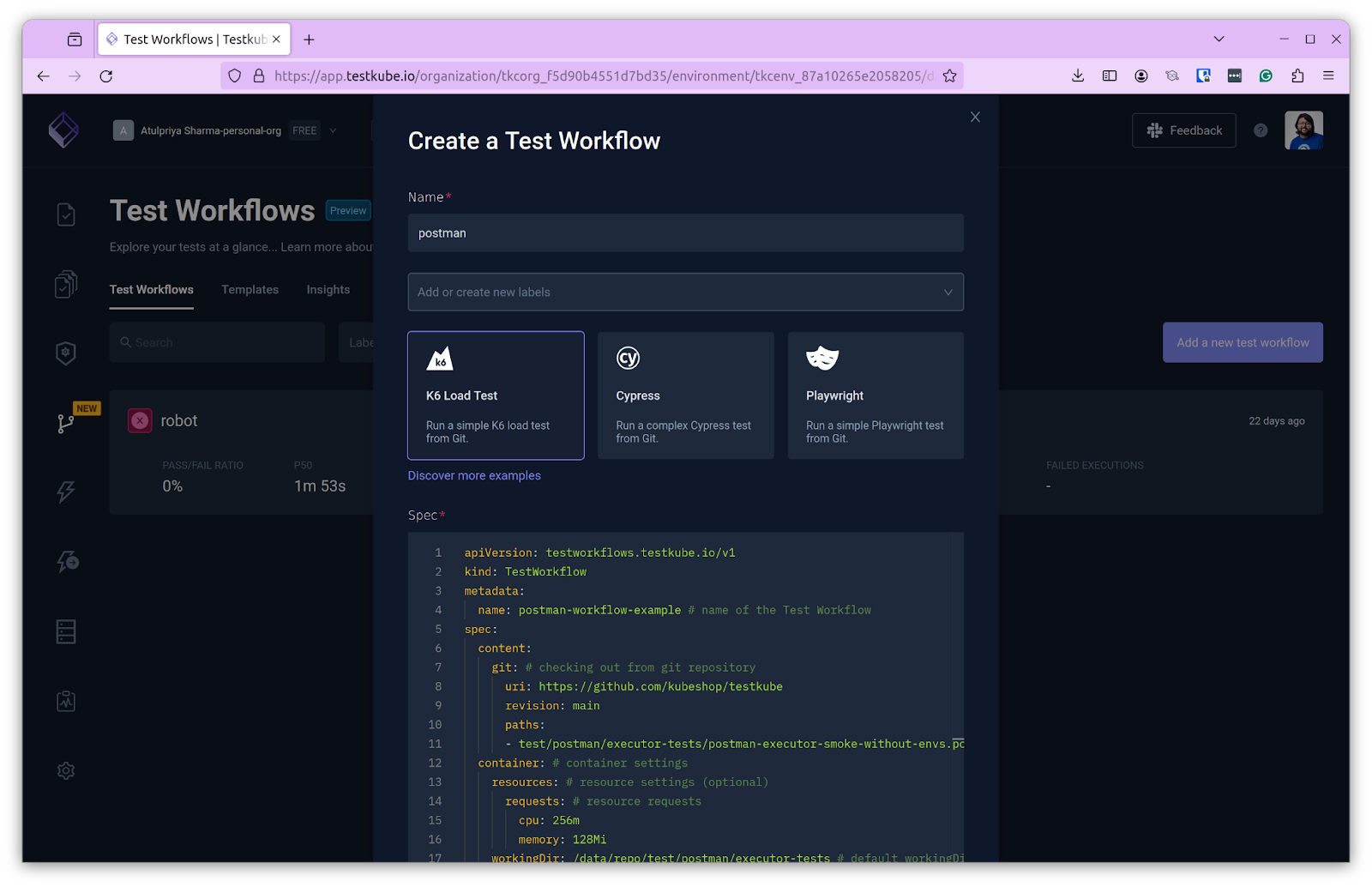

In this section, we’ll examine how to create a test workflow using Testkube. We’ll create one for Postman, but you can configure a workflow for virtually any testing tool.

Creating A Test Workflow Using Postman

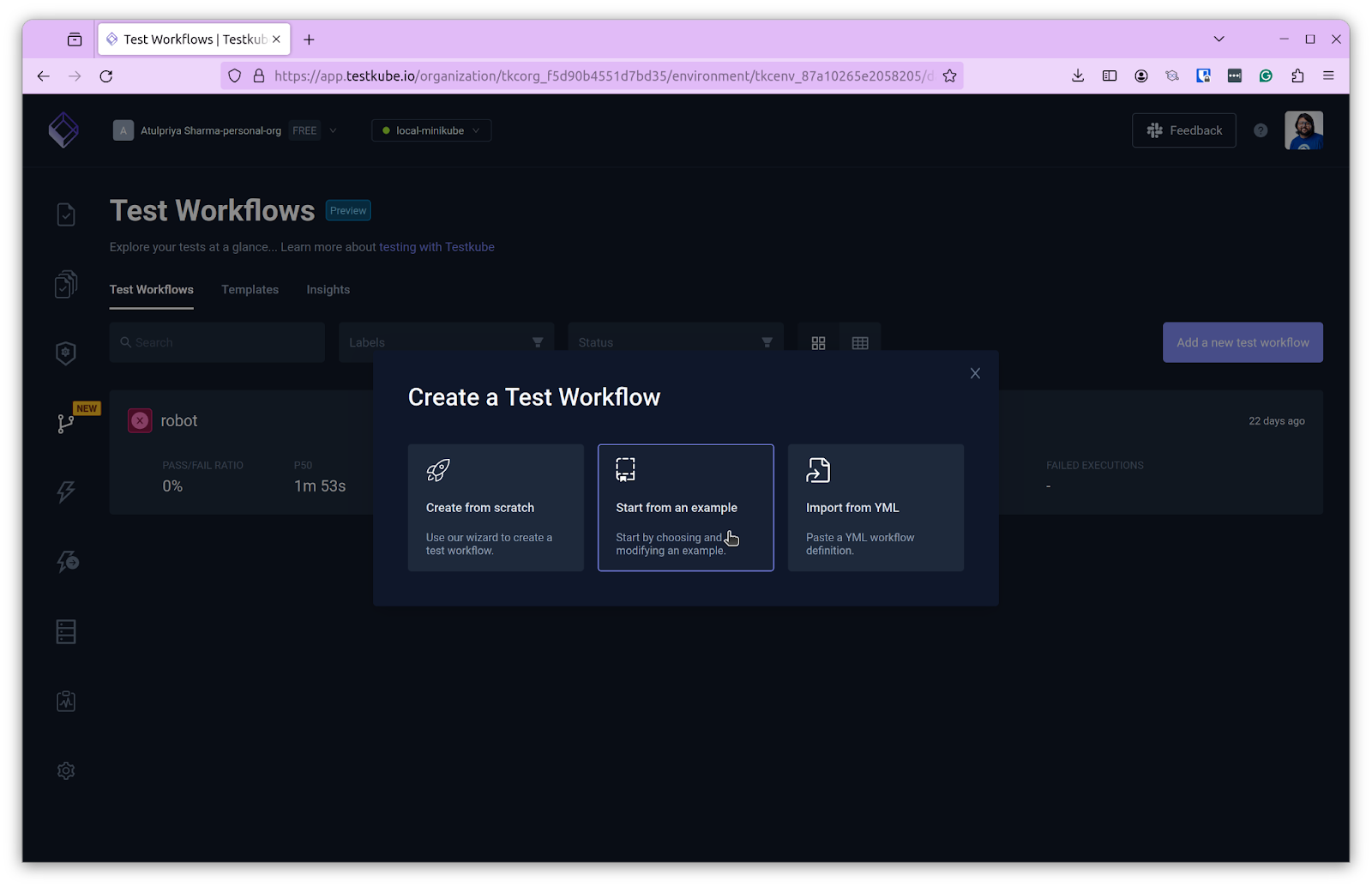

To create a test workflow, you need a running cluster with the Testkube agent installed. Once that is in place, log in to the Testkube dashboard, click on Test Workflows, select “Add A New Test Workflow”, and choose “Start From An Example”.

By default, a k6 test spec is provided. We’ll be using Postman instead. To do so, click on the “Discover more examples” link, which will take you to the Test Workflows document. Scroll down to the Postman section and copy the code present there. You can also use the one shown below:
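A minimal Postman workflow spec, modeled on the Postman example in the Testkube documentation, might look like the following. Treat it as a sketch: the workflow name, the Git repository path, the collection file, the resource requests, and the `postman/newman` image tag are assumptions based on the public examples, so check them against the current docs before use.

```yaml
apiVersion: testworkflows.testkube.io/v1
kind: TestWorkflow
metadata:
  name: postman-workflow-smoke          # assumed name
spec:
  content:
    git:
      uri: https://github.com/kubeshop/testkube
      revision: main
      paths:
        # assumed path to a sample Postman collection in the Testkube repository
        - test/postman/executor-tests/postman-executor-smoke-without-envs.postman_collection.json
  container:
    resources:
      requests:
        cpu: 256m
        memory: 128Mi
    workingDir: /data/repo/test/postman/executor-tests
  steps:
    - name: Run test
      run:
        image: postman/newman:6-alpine  # Newman runs Postman collections from the command line
        args:
          - run
          - postman-executor-smoke-without-envs.postman_collection.json
```

The spec fetches the collection from Git, then runs it with Newman in a container inside the cluster.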

Paste this in the spec section and click Create. This will create the test workflow and take you to the workflow page.

Executing a Test Workflow

Click on “Run Now” to start the workflow execution. Clicking on the respective execution will show you the logs, artifacts, and the underlying workflow file.

Testkube Workflows preserve the logs and artifacts generated by the tests, which gives you better observability. As shown above, creating a test workflow is fairly straightforward, and you can bring your own testing tool and create a workflow for it with a few clicks.

Summary

Moving from Docker-in-Docker to Kubernetes for testing is increasingly necessary given the scale and complexity of today's applications. By leveraging Kubernetes for testing, we take advantage of its inherent strengths in scaling, security, and efficiency, to name a few.

With tools like Testkube, organizations can achieve more reliable, efficient, and cost-effective testing processes. If you are ready to elevate your testing strategy with Kubernetes and Testkube, visit our website to learn more about Testkube's capabilities and how it can transform your testing workflow.

About Testkube

Testkube is the open testing platform for AI-driven engineering teams. It runs tests directly in your Kubernetes clusters, works with any CI/CD system, and supports every testing tool your team uses. By removing CI/CD bottlenecks, Testkube helps teams ship faster with confidence.