Executive Summary

Kubernetes revolutionized how we think about infrastructure provisioning. You tell it what you need, and it figures out the how. Need more compute? Increased storage demands? Network surges? Kubernetes handles it all. Everything scales, except testing.

While the way we build and deploy apps has changed, testing infrastructure has largely stayed the same and hasn’t kept up with deployment infrastructure. Kubernetes enables elastic, event-driven workloads, yet testing remains locked to CI/CD pipelines: sequential and resource-constrained. Teams share the same pipeline runners. Integration tests wait on infrastructure reconciliation. Performance tests run only on release branches because the pipeline can't afford them elsewhere.

Organizations try to solve this issue by adding more runners, optimizing test suites, or parallelizing within pipelines. But these address symptoms, not the root cause. The problem isn't pipeline efficiency - it's pipeline dependency.

Decoupled testing treats testing as infrastructure, not a pipeline step. Tests run as Kubernetes workloads, triggered by events anywhere in the system, scaled independently of CI/CD capacity.

In this post, we look at why pipeline-bound testing breaks at scale, what decoupled testing architecture looks like, and how it serves as the foundation for modern continuous quality in Kubernetes environments.

The Pipeline Bottleneck: Why Coupled Testing Breaks at Scale

Pipeline-bound testing creates bottlenecks at scale for both structural and practical reasons. The structural constraints are inherent to how pipelines work; the symptoms are what teams experience daily when those constraints compound.

Let us look at both the constraints and the symptoms to understand the issue better.

Structural Constraints

- Pipeline Serialization: CI/CD pipelines are inherently sequential. Stage A completes, stage B begins. Tests wait for builds to finish. Deployments wait for tests to complete. Test parallelization within stages helps, but doesn't eliminate stage-to-stage serialization. This architectural choice made sense when teams deployed weekly; it becomes a bottleneck when deploying multiple times daily.

- Infrastructure Dependencies: Pipeline-coupled tests depend on two separate state systems: the pipeline execution state (which stage, which step, what artifacts) and the Kubernetes cluster state (pod readiness, service availability, endpoint health). These systems reconcile on different timelines. The pipeline doesn't wait for cluster convergence; it proceeds on its own schedule, creating a timing mismatch which leads to flaky tests.

- Linear Resource Scaling: Adding pipeline capacity means adding more runners, and more runners mean proportionally more cost. This contrasts with Kubernetes workload scaling, which leverages shared resources, bin-packing, and elastic autoscaling. Testing infrastructure can't benefit from the same efficiency gains that make Kubernetes cost-effective for application workloads.

These structural constraints lead to the operational inefficiencies listed below:

- Shared Resource Contention: Multiple teams compete for the same pipeline runners creating a queue. A hotfix waits behind a feature branch's full test suite. Teams begin skipping tests to avoid delays, accepting quality risk for velocity.

- Infrastructure Timing Flakiness: Tests fail intermittently because pipelines reach test stages before Kubernetes clusters fully reconcile. Pods aren't ready. Services resolve but backends aren't healthy. These aren't code-related bugs; they arise from the state mismatch between pipelines and infrastructure.

- Test Result Fragmentation: Test outcomes scatter across systems - CI tools, monitoring platforms, security scanners, cluster logs. Unified visibility doesn't exist. Correlating a test failure with the Kubernetes event that caused it requires manual intervention across disconnected data sources.

- Full-Suite Re-Runs: A single flaky test requires re-executing the entire pipeline. No selective re-runs for specific test subsets. Prior run artifacts contaminate subsequent executions. Developers waste hours re-running hundreds of passing tests to isolate one unstable test.

Together, these constraints and symptoms show why testing coupled to pipelines cannot scale the way Kubernetes-native workloads do.
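The timing mismatch described under Infrastructure Timing Flakiness can be made concrete with a small sketch. The Python below polls a readiness check until it passes or a timeout expires, which is the kind of gate a test needs before running against a cluster that is still reconciling. `wait_until_ready` and `FakeCluster` are hypothetical names introduced for illustration; a real probe would query pod or endpoint status rather than count polls.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float = 60.0,
    interval_s: float = 2.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll is_ready() until it returns True or timeout_s has elapsed."""
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True
        if clock() >= deadline:
            return False  # cluster never converged within the budget
        sleep(interval_s)


class FakeCluster:
    """Hypothetical stand-in for a real probe: reports ready on the Nth poll."""

    def __init__(self, ready_after_polls: int) -> None:
        self.polls = 0
        self.ready_after_polls = ready_after_polls

    def is_ready(self) -> bool:
        self.polls += 1
        return self.polls >= self.ready_after_polls


if __name__ == "__main__":
    cluster = FakeCluster(ready_after_polls=3)
    ok = wait_until_ready(cluster.is_ready, timeout_s=1.0, interval_s=0.01)
    print("ready" if ok else "timed out", "after", cluster.polls, "polls")
```

A pipeline stage that starts tests on its own schedule skips this gate entirely, which is why the same test can pass on one run and fail on the next without any code change.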

Decoupled Testing: The Architectural Shift

The solution to the pipeline bottlenecks discussed above isn’t optimizing the pipelines - it’s removing the dependency. Decoupled testing treats tests as infrastructure workloads rather than pipeline steps, enabling the same elastic, event-driven execution model that makes Kubernetes effective for apps.

What Decoupling Means

The idea is simple: separate test execution from the CI/CD pipeline lifecycle. Tests don’t need to wait for pipeline stages to finish or for a runner to become available. Instead, they execute as independent Kubernetes workloads - pods and jobs that can run anywhere in the cluster, be triggered by any event, and scale based on demand.

Tests can still be triggered by pipelines, but they aren’t managed by them. Pipeline execution doesn’t gate test execution. Test resource allocation doesn’t compete with build jobs, and results aggregate centrally regardless of trigger source.

Just as Kubernetes changed application deployment by abstracting apps into workloads that run anywhere in a cluster, decoupled testing converts tests into workloads with independent lifecycle management.

Testing as Infrastructure

Treating testing as infrastructure means adopting Kubernetes-native patterns:

- Tests as Custom Resources: Tests are defined as Kubernetes custom resources (via CRDs), stored in Git alongside application manifests. They are version-controlled, code-reviewed, and GitOps-compatible.

- Workload Execution Model: Each test execution runs as a Kubernetes Job or Pod and follows the standard Kubernetes scheduling constructs, such as resource requests, limits, and affinity rules. Tests benefit from the same bin-packing, resource sharing, and elastic scaling as application workloads.

- Event-Driven Orchestration: Tests trigger from Kubernetes events (deployments, config changes), external webhooks, schedules, API calls, or manual invocations. Multi-source triggering enables your testing to respond to system changes and not just code commits.

- Independent Scaling: Test capacity scales with cluster capacity. Need to run 1000 load test workers? Kubernetes schedules them across available nodes. No pipeline runner bottlenecks, and no cost that grows linearly with runner count.
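To illustrate the workload execution model, here is a minimal sketch that builds a plain Kubernetes `batch/v1` Job manifest for a test run, with explicit resource requests and limits. It uses only standard Job fields and is not a Testkube resource. The function name `build_test_job`, the label, the image reference, and the command are hypothetical placeholders.

```python
import json
from typing import Any


def build_test_job(
    name: str,
    image: str,
    command: list[str],
    cpu: str = "500m",
    memory: str = "256Mi",
) -> dict[str, Any]:
    """Return a batch/v1 Job manifest that runs one test container to completion."""
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name, "labels": {"purpose": "test-execution"}},
        "spec": {
            "backoffLimit": 0,  # do not retry: a failed test run should stay failed
            "ttlSecondsAfterFinished": 3600,  # let Kubernetes clean up the finished Job
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [
                        {
                            "name": "test-runner",
                            "image": image,
                            "command": command,
                            # The scheduler bin-packs on requests; limits cap usage.
                            "resources": {
                                "requests": {"cpu": cpu, "memory": memory},
                                "limits": {"cpu": cpu, "memory": memory},
                            },
                        }
                    ],
                }
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical image and command, for illustration only.
    job = build_test_job(
        "api-smoke", "registry.example.com/api-tests:1.0", ["pytest", "-q"]
    )
    print(json.dumps(job, indent=2))
```

Saved to a file, the printed JSON could be applied with `kubectl apply -f`, which accepts JSON as well as YAML. The point of the sketch is that the test is scheduled like any other workload, not by a pipeline runner.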

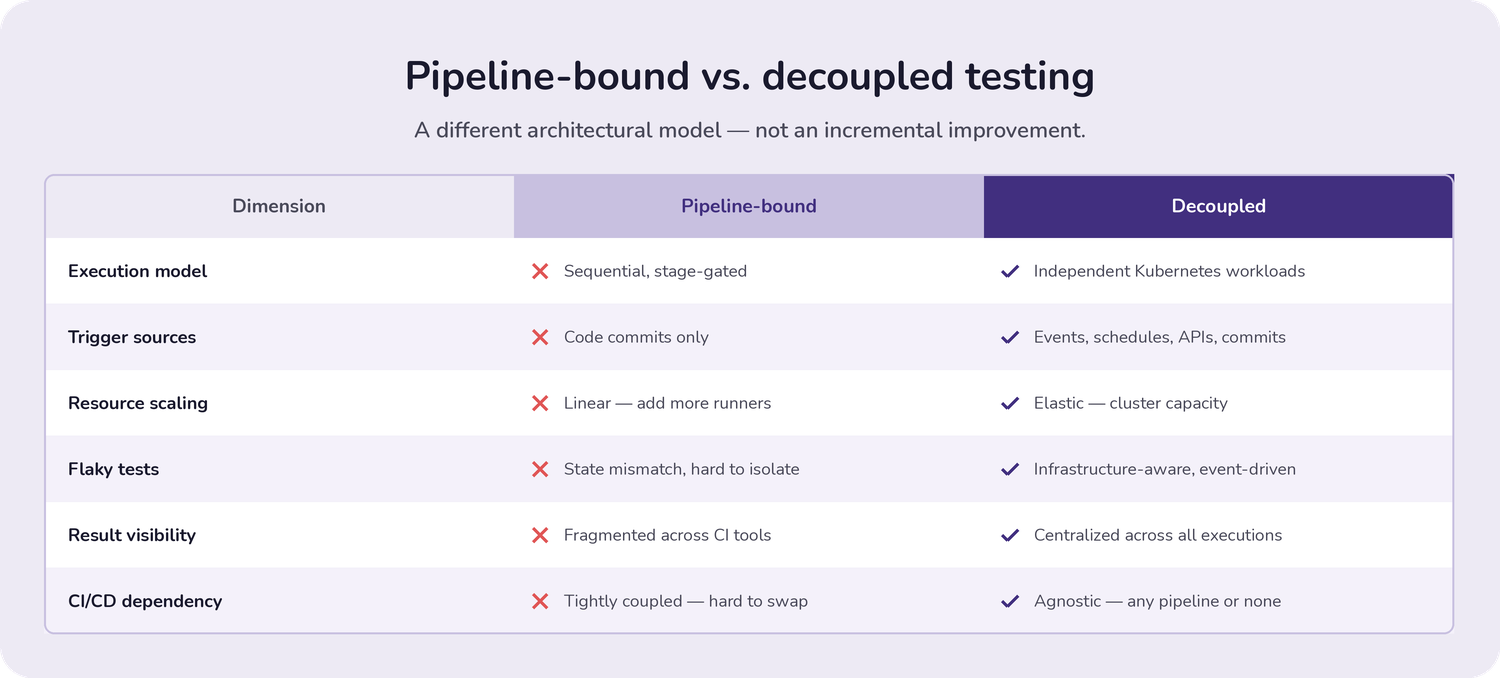

Pipeline-Bound vs Decoupled Testing Comparison

This isn't an incremental improvement - it's a different architectural model. One locks testing to pipeline constraints. The other gives testing the same flexibility, scalability, and event-driven execution that makes Kubernetes effective for applications.

How Testkube Enables Decoupled Testing

As we’ve seen, decoupled testing needs a different architectural model that separates the operational and execution tooling. Testkube provides the platform that makes this practical - running tests as Kubernetes-native workloads while maintaining observability across all executions.

Here are a few ways Testkube enables decoupled testing:

Kubernetes-native Test Orchestration

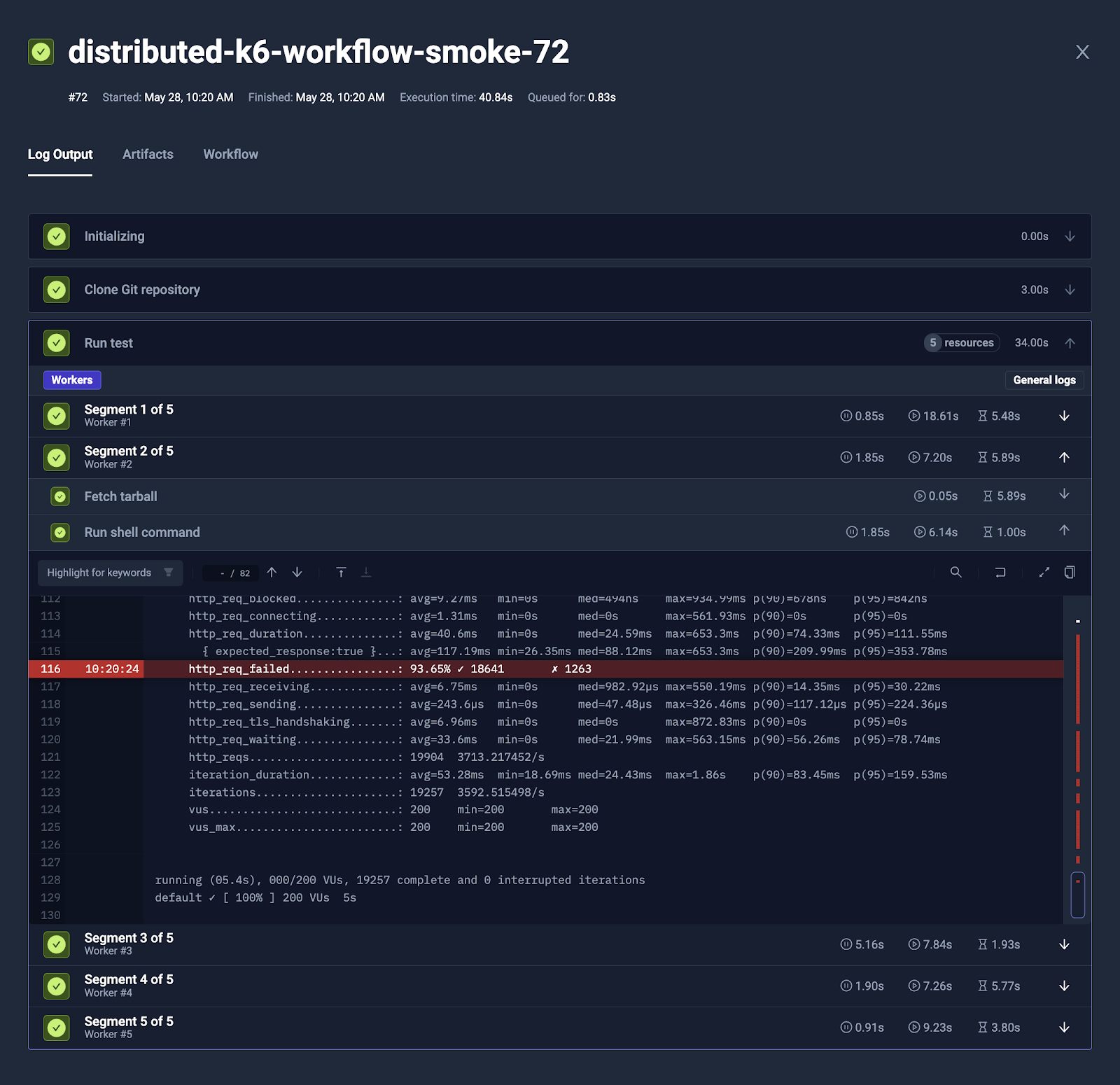

Testkube defines test executions as Test Workflows - Kubernetes custom resources, backed by Custom Resource Definitions, that can be stored in Git. Each test execution runs as a Kubernetes Job or Pod with independent resource allocation. This means tests benefit from native Kubernetes capabilities: resource requests and limits, node affinity rules, scaling, and automatic cleanup.

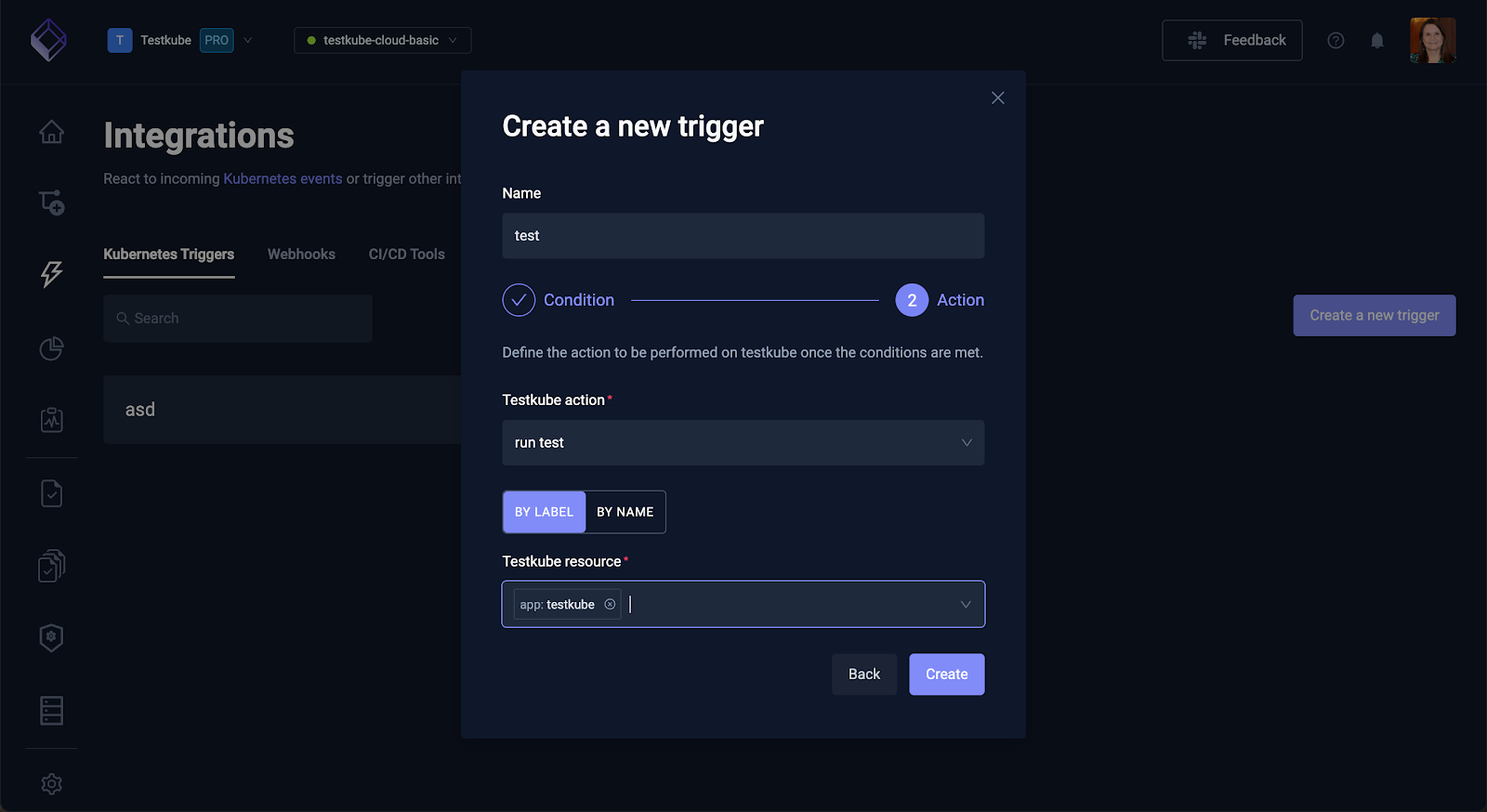

Event-Driven Execution with Event Triggers

Testkube allows you to execute Test Workflows using Event Triggers. These triggers listen for Kubernetes events such as deployment updates, scaling, or changes to ConfigMaps or Services, and automatically execute tests in response. Event Triggers can execute a Test Workflow without any manual intervention and without waiting for the next code commit.
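The general idea behind event triggers can be sketched in a few lines of Python. This is an illustration of the matching logic only, not Testkube's trigger schema or implementation: the `Event` and `Trigger` classes, their fields, and the workflow names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    """A simplified cluster event (hypothetical shape)."""
    kind: str    # e.g. "Deployment" or "ConfigMap"
    action: str  # e.g. "modified"
    labels: dict[str, str] = field(default_factory=dict)


@dataclass
class Trigger:
    """Maps a class of events to the workflow that should run (hypothetical shape)."""
    kind: str
    action: str
    workflow: str
    selector: dict[str, str] = field(default_factory=dict)


def matches(trigger: Trigger, event: Event) -> bool:
    """True when kind and action match and every selector label is on the event."""
    return (
        trigger.kind == event.kind
        and trigger.action == event.action
        and all(event.labels.get(k) == v for k, v in trigger.selector.items())
    )


def workflows_for(event: Event, triggers: list[Trigger]) -> list[str]:
    """Return the names of all workflows whose trigger matches the event."""
    return [t.workflow for t in triggers if matches(t, event)]


# Hypothetical trigger definitions, for illustration only.
TRIGGERS = [
    Trigger("Deployment", "modified", "checkout-smoke", {"app": "checkout"}),
    Trigger("ConfigMap", "modified", "config-regression"),
]

if __name__ == "__main__":
    event = Event("Deployment", "modified", {"app": "checkout", "tier": "web"})
    print(workflows_for(event, TRIGGERS))
```

Because the match runs on cluster events rather than commits, a rollout or configuration change can start a test run even when no pipeline is executing.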

Multi-source Trigger Support

Test Workflows can also be triggered from multiple sources, including GitHub Actions, GitLab CI, Kubernetes events, cron schedules, API calls, or manual execution through the dashboard. This flexibility means that CI/CD pipelines can trigger tests without actually managing their execution. Pipelines can remain lightweight: they call the Testkube API and let Testkube handle the orchestration and execution of the tests themselves.

Framework-Agnostic Execution

Testkube supports any testing tool including Playwright, Cypress, k6, Postman, JUnit, Artillery, or custom scripts. Each tool runs in its own container with isolated dependencies. Teams aren't locked into specific frameworks - they use the tools that fit their needs while Testkube provides unified orchestration.

Frontend teams use Playwright. Backend teams use Postman. Performance engineers use k6. All tests execute through the same orchestration layer with consistent observability.

Parallel Test Execution

Testkube automatically shards and parallelizes tests across multiple pods. A 1000-test suite can run as 10 pods executing 100 tests each, or 100 pods executing 10 tests each - fully configurable based on your requirements, test characteristics and cluster capacity. Execution time scales with available resources, not runner count.
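The arithmetic behind the 10-pod and 100-pod examples can be sketched as follows. This is a simple round-robin split written for illustration; it is an assumption about how sharding could work, not a description of Testkube's sharding strategy, and `shard` is a hypothetical helper name.

```python
def shard(tests: list[str], shard_count: int) -> list[list[str]]:
    """Split tests into shard_count groups using round-robin assignment.

    Group sizes differ by at most one, so no pod gets a much longer queue.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    return [tests[i::shard_count] for i in range(shard_count)]


if __name__ == "__main__":
    suite = [f"test_{n:04d}" for n in range(1000)]

    ten_pods = shard(suite, 10)
    print(len(ten_pods), "pods x", len(ten_pods[0]), "tests")

    hundred_pods = shard(suite, 100)
    print(len(hundred_pods), "pods x", len(hundred_pods[0]), "tests")
```

Each inner list would become the work assigned to one pod. A round-robin split balances counts, not durations; suites with a few very slow tests may need a split weighted by historical run time.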

Centralized Test Observability

Test Insights aggregates results from all test executions into a unified dashboard, regardless of trigger source, execution location, or testing framework. Logs, artifacts, metrics, and resource consumption appear in one place.

AI-powered analysis examines test logs to identify patterns and suggest root causes for failures. Compare logs between runs to spot what changed. Correlate test failures with Kubernetes events to understand if infrastructure issues caused problems rather than code defects.
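Correlating a failure with cluster activity comes down to a time-window join. The sketch below shows that idea in plain Python under simplified assumptions; it is not how Testkube performs the correlation. The `ClusterEvent` and `FailedTest` records, the `events_near` helper, and the two-minute window are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ClusterEvent:
    at: datetime
    reason: str  # e.g. "Unhealthy" or "ScalingReplicaSet"
    obj: str     # the object the event refers to


@dataclass
class FailedTest:
    at: datetime
    name: str


def events_near(
    failure: FailedTest,
    events: list[ClusterEvent],
    window: timedelta = timedelta(minutes=2),
) -> list[ClusterEvent]:
    """Return events that happened at or before the failure, within the window."""
    return [e for e in events if timedelta(0) <= failure.at - e.at <= window]


if __name__ == "__main__":
    t0 = datetime(2025, 1, 1, 12, 0, 0)
    events = [
        ClusterEvent(t0 - timedelta(minutes=10), "ScalingReplicaSet", "deploy/cart"),
        ClusterEvent(t0 - timedelta(seconds=45), "Unhealthy", "pod/checkout-7d9f"),
        ClusterEvent(t0 + timedelta(seconds=30), "Pulled", "pod/cart-5c2a"),
    ]
    failure = FailedTest(t0, "checkout_flow")
    for e in events_near(failure, events):
        print(failure.name, "failed shortly after", e.reason, "on", e.obj)
```

An event that lands inside the window is a lead for investigation, not proof of cause; it only narrows where to look first.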

CI/CD Agnostic Design

Testkube works with any CI/CD tool. Pipelines trigger tests via API calls or CLI commands but don't manage execution. This means switching CI/CD platforms doesn't require rebuilding test infrastructure.

For teams running multiple CI/CD tools across different projects, Testkube provides consistent test orchestration regardless of which pipeline triggered the execution.

The Foundation for Modern Continuous Quality

Decoupled testing does more than solve pipeline bottlenecks: it builds the foundation for advanced continuous quality patterns. With this foundation in place, approaches like continuous validation pipelines and AI-powered testing become practical to implement, free of the limitations of pipeline-bound testing.

The Architectural Stack

Kubernetes - Platform Layer

Provides elastic compute, event-driven orchestration, and declarative infrastructure management. The foundation for cloud-native applications.

Decoupled Testing Infrastructure - Foundation Layer

Tests execute as Kubernetes workloads, independent of CI/CD pipelines. Event-driven triggering responds to deployments, config changes, and infrastructure events - not just code commits. Centralized observability aggregates results regardless of trigger source.

Continuous Validation Pipelines - Process Layer

Multi-stage validation across the entire SDLC: pre-commit checks, pull request gates, post-deployment verification, and production monitoring. Continuous validation requires tests that trigger from any stage, not just CI/CD gates. Decoupled testing enables validation anywhere in the development lifecycle, making continuous quality practical across all environments.

AI-Powered Testing - Innovation Layer

Testkube AI agents dynamically generate, execute, and debug tests through programmatic orchestration. Testkube's MCP Server integration exposes testing as tools that external AI tools and agents can invoke - creating Test Workflows, triggering execution, analysing failures, and iterating based on results. This addresses the AI velocity paradox: AI generates code 5-10x faster, but validation must keep pace.

Without decoupled testing, continuous validation remains restricted to pipeline stages. Without independent test execution, AI agents can't dynamically orchestrate workflows. The foundation determines what's architecturally possible at higher layers.

Conclusion: Rethinking Testing Infrastructure

Pipeline-bound testing creates bottlenecks that compound with scale: serial execution, resource contention, limited triggering, fragmented visibility.

Decoupled testing removes the constraint by treating tests as Kubernetes infrastructure. Tests execute independently, trigger from any source, scale elastically, and centralize observability. This architecture also enables continuous validation across all environments and AI-powered testing workflows.

Want to explore how this works for your team? Schedule a demo and we'll show you Kubernetes-native testing tailored to your infrastructure.

About Testkube

Testkube is the open testing platform for AI-driven engineering teams. It runs tests directly in your Kubernetes clusters, works with any CI/CD system, and supports every testing tool your team uses. By removing CI/CD bottlenecks, Testkube helps teams ship faster with confidence.

Get Started with a trial to see Testkube in action.